Pushing back on a news report, Facebook has explained how its AI is being developed and used to remove hate speech from its platform.

In a blog post, Facebook’s Integrity VP said that handling and removing hate speech on the platform is a multi-year journey, in which its teams continually develop and build solutions.

Facebook’s AI in Handling Hate Speech

Over the years, Facebook has grown into one of the most controversial online platforms. Beyond allegations that the company sells user data, there are growing concerns about hate speech prevailing on the platform.

The Wall Street Journal recently reported that Facebook’s AI does little to curb hate speech, or fails to handle it adequately. In reply to that article, Facebook’s Integrity VP Guy Rosen said in a blog post,

“We don’t want to see hate on our platform, nor do our users or advertisers, and we are transparent about our work to remove it.”

Further, “What these documents demonstrate is that our integrity work is a multi-year journey. While we will never be perfect, our teams continually work to develop our systems, identify issues and build solutions.”

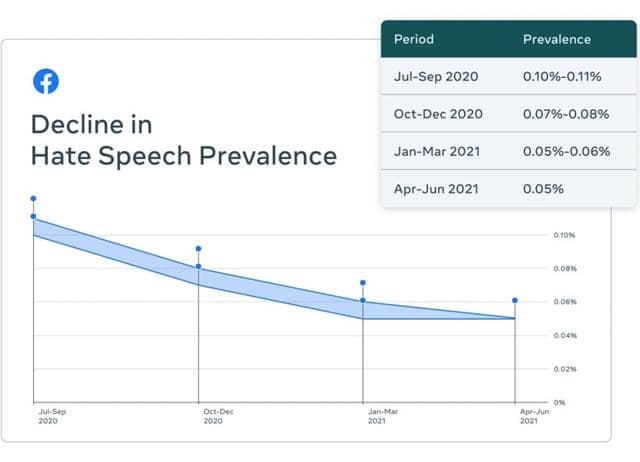

Rosen also said that the prevalence of hate speech on Facebook has fallen by almost 50% in the last three quarters. He further argued that the number of content removals isn’t the best way to measure success.

Instead, what matters is prevalence: the hate speech that slips past detection and is actually seen on the platform. Facebook said this has dropped significantly, with hate speech now accounting for about five of every 10,000 content views.

Facebook also says it has refined its AI to be more effective: it now detects more than 97% of the hate speech the company removes, up from 23.6% earlier, with the rest flagged by people.
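
To make the two figures concrete, here is a minimal Python sketch of how such ratios could be computed. The function names and sample counts are hypothetical, chosen only to reproduce the five-in-10,000 and 97% figures quoted above; this is not Facebook’s actual methodology.

```python
def prevalence(violating_views: int, total_views: int) -> float:
    """Share of all content views that landed on violating content."""
    if total_views <= 0:
        raise ValueError("total_views must be positive")
    return violating_views / total_views


def proactive_rate(detected_by_ai: int, total_removed: int) -> float:
    """Share of removed content that AI found, rather than people."""
    if total_removed <= 0:
        raise ValueError("total_removed must be positive")
    return detected_by_ai / total_removed


# Hypothetical sample counts matching the figures in the article.
print(f"Prevalence: {prevalence(5, 10_000):.2%}")          # 5 in 10,000 views
print(f"Proactive rate: {proactive_rate(97, 100):.0%}")    # 97 of 100 removals
```

The two ratios answer different questions: prevalence describes what users actually end up seeing, while the detection share only describes how removed content was found.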